Haocheng Wang (王浩丞)

I am a PhD student at HKUST(GZ) and a Visiting Researcher at ETH Zürich, focusing on formal mathematical reasoning and autoformalization.

日拱一卒 (advance one step every day) · Keep bounding the rock

I am a direct-entry PhD student in the DSA Thrust at HKUST(GZ), supervised by Prof. Zhijiang Guo. I am currently a Visiting Researcher at ETH Zürich (D-INFK), working with Prof. Rasmus Kyng and Dr. Sorrachai Yingchareonthawornchai on verifiable autoformalization and formal reasoning in Theoretical Computer Science. Previously, I was a Student Researcher at ByteDance Seed and DeepSeek AI.

I graduated with a Bachelor's degree (Honours) in Mathematics and Applied Mathematics from Xiamen University in 2025. My undergraduate thesis focused on formalizing Auction Theory in Lean 4 with Mathlib4, supervised by Prof. Ma Jiajun.

My research focuses on:

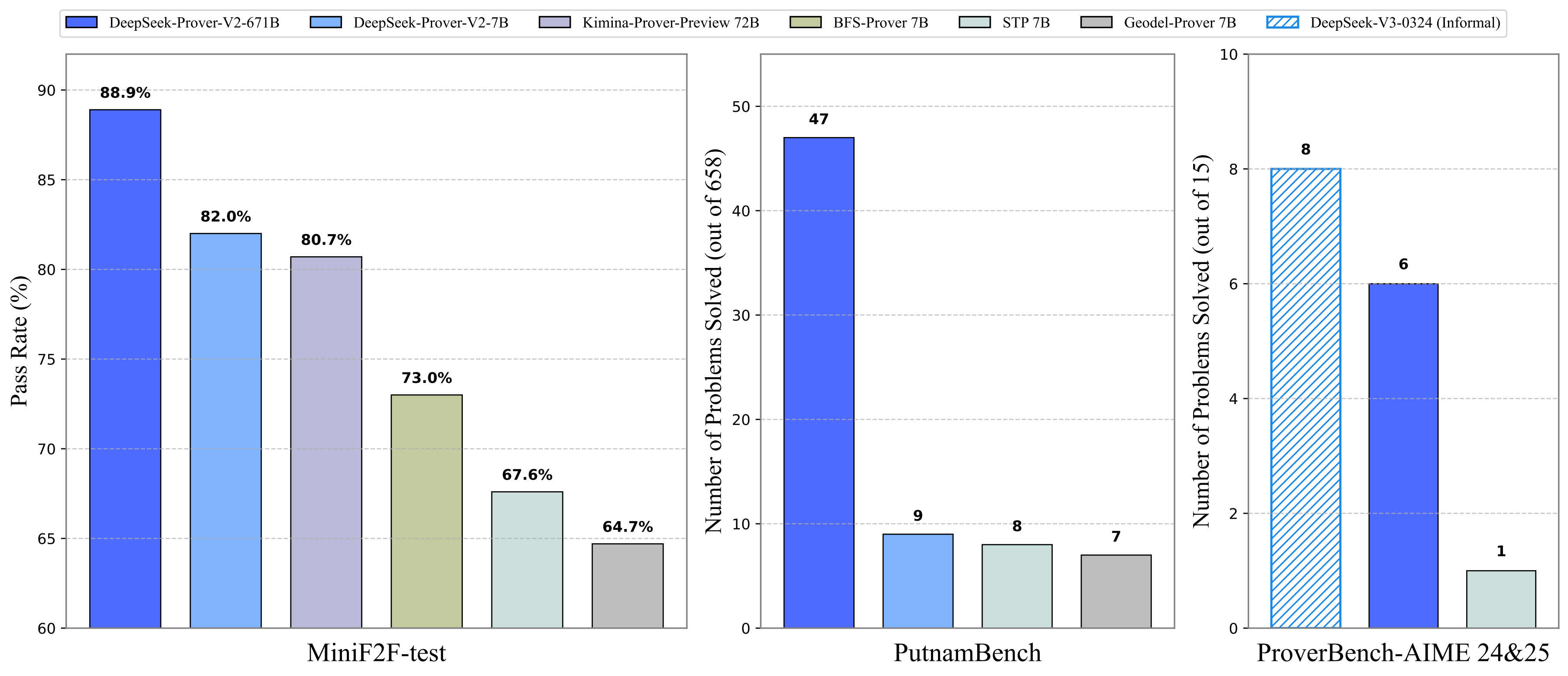

DeepSeek-Prover-V2, a 671B-parameter model achieving SOTA results on miniF2F (88.9%) and PutnamBench (47 out of 658). Our approach combines reinforcement learning with subgoal decomposition to enhance formal mathematical reasoning capabilities.

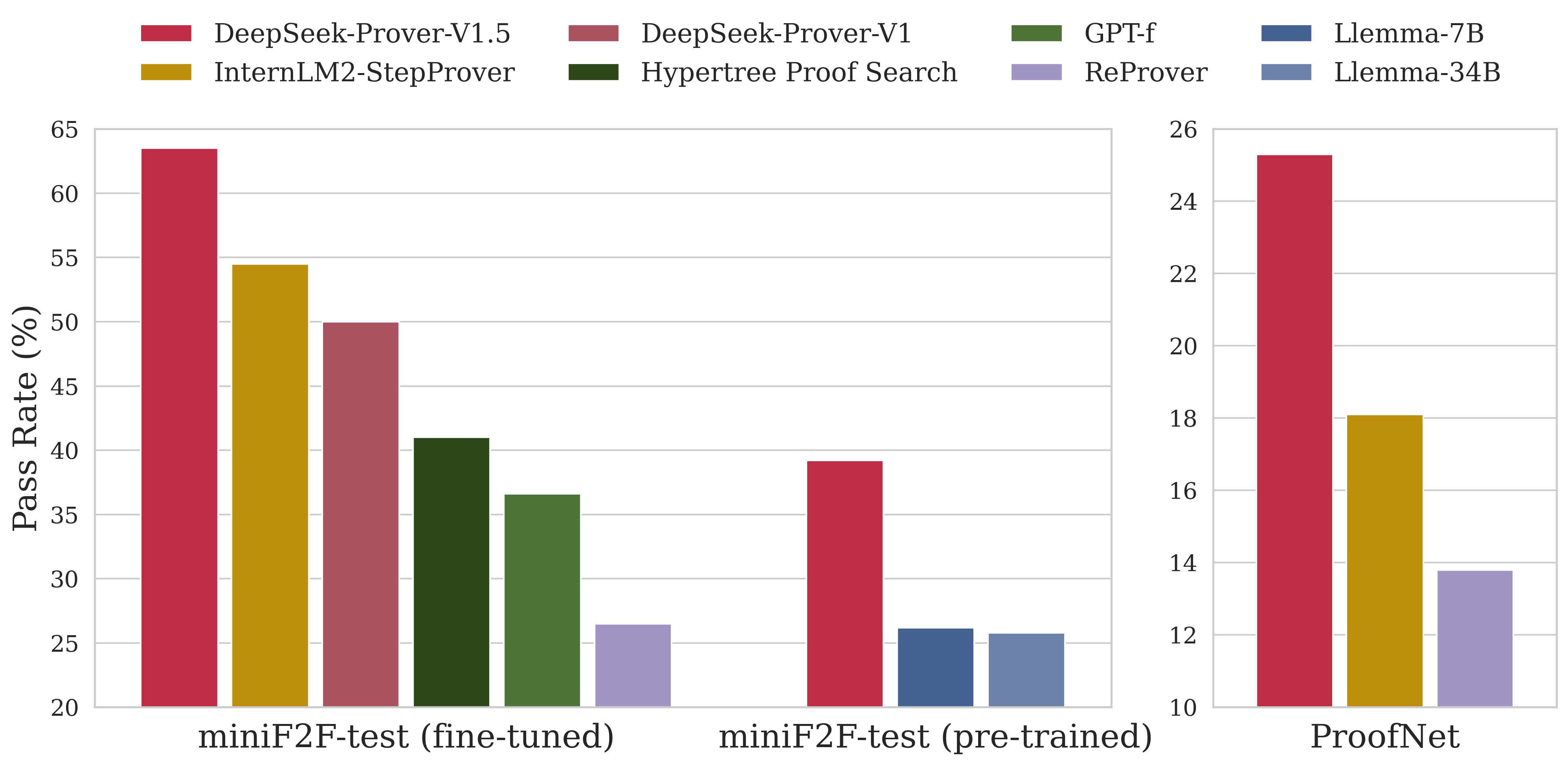

A novel hybrid approach combining LLMs and Monte-Carlo tree search for automated theorem proving. Achieved SOTA results on miniF2F (63.5%) and ProofNet (25.3%).

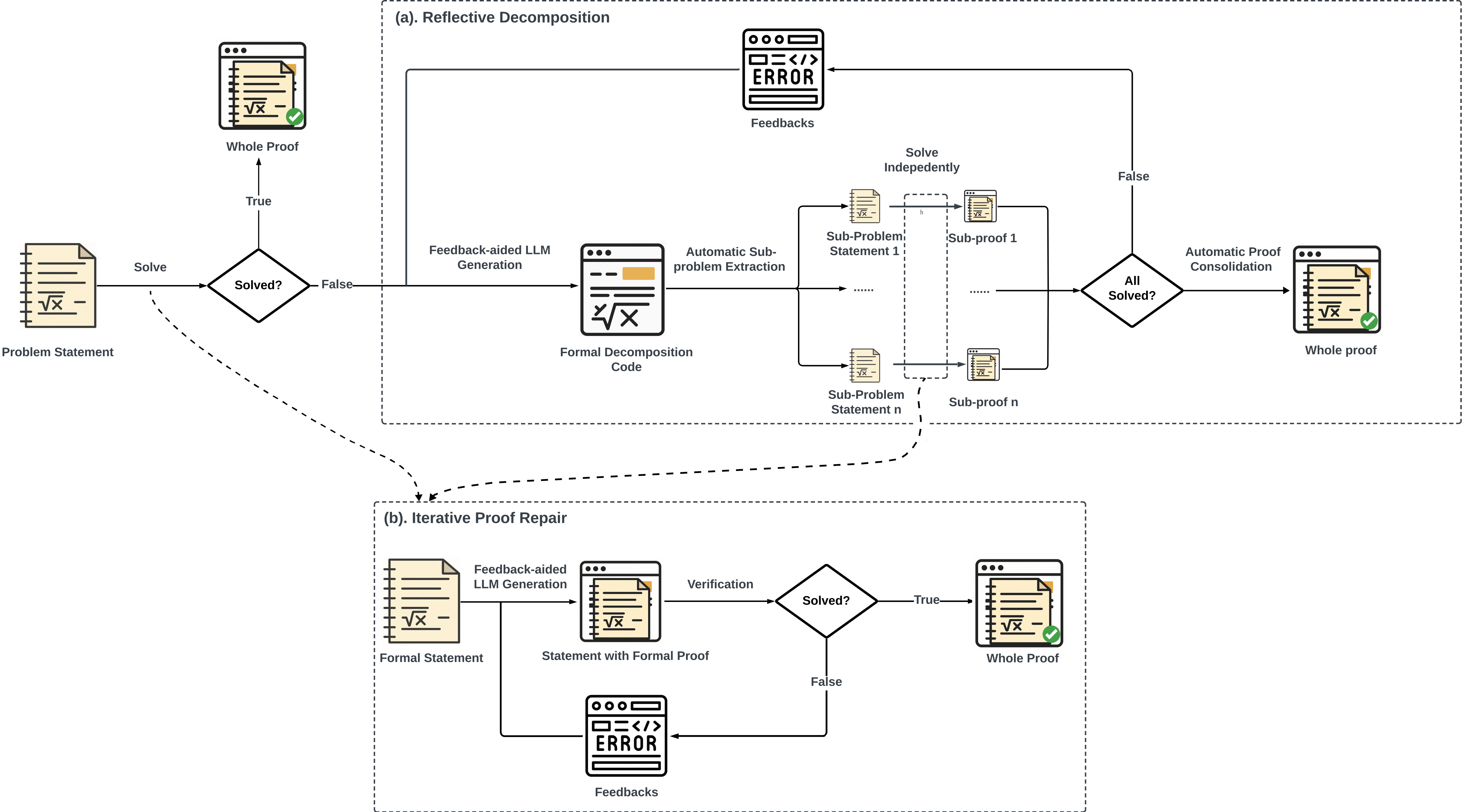

An agent-based training-free framework that enables general-purpose Large Language Models (gLLMs) to solve complex formal proofs in Lean 4.

Sep 2020 - Jun 2025

Bachelor of Science in Mathematics and Applied Mathematics (Honours)

Final Thesis: Formalization of Auction Theory using Lean 4 and Mathlib4

[GitHub]

Feel free to reach out to me via email at haocheng.wang [at] inf.ethz.ch or connect with me on GitHub.